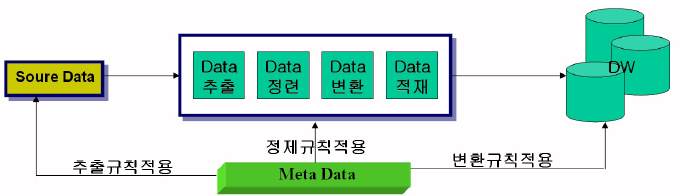

“Data is the new oil” implies that data is a valuable resource that can drive innovation, growth, and competitive advantage for businesses. Businesses are generating more data than ever before, but they often struggle to tap into the full potential of their data, leaving valuable insights untapped and opportunities unrealized. This can be due to a lack of technical expertise or resources to manage and maintain data systems, as well as siloed data that is difficult to access and integrate.

The process of taking insights or information gleaned from data analysis and using it to drive action or decision-making is called activation. Activation is a critical component of the data lifecycle, enabling businesses to unlock the full potential of their data and drive meaningful outcomes. Developers and data engineers have built modern data stacks that make data more accessible to business users and by effectively activating data insights, businesses can stay competitive, drive growth, and improve customer experiences.

Reverse ETL – What’s the Difference?

ETL was introduced as a process for integrating and loading data for computation and analysis, eventually becoming the primary method to process data for data warehousing projects.

ETL stands for Extract, Transform, and Load. It is a process used to extract data from various sources, transform it into a format suitable for analysis, and load it into a target database or data warehouse.

The ETL process is critical for data integration, data warehousing, and business intelligence. It enables organizations to consolidate data from multiple sources, transform it into a consistent format, and load it into a target system where it can be used for analysis and reporting.

ETL processes are often used to create and maintain a single source of truth. By extracting data from multiple sources, transforming it to meet certain standards, and loading it into a single destination system, organizations can ensure that all data is consistent and accurate. Having a single source of truth is important because it helps prevent inconsistencies and errors in data analysis and reporting. If multiple versions of the same data exist in different places, it can lead to confusion, mistakes, and wasted time. By consolidating data into a single source of truth, organizations can improve data quality and make better-informed decisions.

The first step of the ETL process is to extract data from various sources such as databases, applications, and files. Data can be extracted from different types of sources, including structured, semi-structured, and unstructured data. This step involves connecting to the source system, identifying the data to be extracted, and pulling the data from the source system.

Data extraction can be performed in different ways, such as full extraction, incremental extraction, or delta extraction. Full extraction involves extracting all the data from the source system, while incremental extraction only extracts new or modified data since the last extraction. Delta extraction extracts changes that have occurred since the last extraction, making it a faster and more efficient method of extraction.

The second step of the ETL process is to transform the extracted data into a format that is suitable for analysis. This step involves cleaning the data, removing duplicates, and converting it into a consistent format. Transformations can be performed in various ways, such as filtering, sorting, aggregating, joining, and splitting data.

Data transformation is critical to ensure data quality, consistency, and accuracy. It also involves data enrichment, where new data is added to existing data to provide more context and insights.

The final step of the ETL process is to load the transformed data into a target database or data warehouse. This step involves mapping the data to the appropriate fields in the target system and ensuring that the data is loaded correctly.
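To make the extract, transform, and load steps concrete, here is a minimal sketch in Python using only the standard library. Everything in it is an assumption made for illustration: the `SOURCE_ROWS` sample data, the `payments` table, and the field names are hypothetical, and an in-memory SQLite database stands in for a real data warehouse. It shows full versus incremental extraction, de-duplication and format normalization during transformation, and field mapping during loading.

```python
import sqlite3
from datetime import datetime

# Hypothetical source rows; a real pipeline would read from a database, API, or file.
SOURCE_ROWS = [
    {"id": 1, "email": " Ada@Example.com ", "amount": "10.50", "updated_at": "2024-01-01T09:00:00"},
    {"id": 2, "email": "bob@example.com", "amount": "7.25", "updated_at": "2024-01-02T12:30:00"},
    {"id": 2, "email": "bob@example.com", "amount": "7.25", "updated_at": "2024-01-02T12:30:00"},  # duplicate
    {"id": 3, "email": "cy@example.com", "amount": "3.00", "updated_at": "2024-01-03T08:15:00"},
]


def extract(rows, since=None):
    """Full extraction when `since` is None; otherwise only rows updated after `since`."""
    if since is None:
        return list(rows)
    return [r for r in rows if datetime.fromisoformat(r["updated_at"]) > since]


def transform(rows):
    """Drop duplicate ids, normalize emails, and convert amounts to integer cents."""
    seen = set()
    cleaned = []
    for r in rows:
        if r["id"] in seen:
            continue  # keep only the first occurrence of each id
        seen.add(r["id"])
        cleaned.append({
            # Map source field names to the (hypothetical) target column names.
            "customer_id": r["id"],
            "email": r["email"].strip().lower(),
            "amount_cents": round(float(r["amount"]) * 100),
        })
    return cleaned


def load(conn, rows):
    """Write transformed rows into the target table, replacing rows with the same key."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS payments "
        "(customer_id INTEGER PRIMARY KEY, email TEXT, amount_cents INTEGER)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO payments (customer_id, email, amount_cents) "
        "VALUES (:customer_id, :email, :amount_cents)",
        rows,
    )
    conn.commit()


def run_etl(conn, since=None):
    """Run extract, transform, and load in order, then return the target table contents."""
    load(conn, transform(extract(SOURCE_ROWS, since)))
    return conn.execute(
        "SELECT customer_id, email, amount_cents FROM payments ORDER BY customer_id"
    ).fetchall()


if __name__ == "__main__":
    target = sqlite3.connect(":memory:")  # stand-in for a data warehouse
    for row in run_etl(target):
        print(row)
```

Calling `run_etl(conn)` performs a full extraction, while passing a timestamp such as `run_etl(conn, since=datetime(2024, 1, 2))` processes only rows changed after that point, which is the incremental case. Using `INSERT OR REPLACE` keyed on `customer_id` is one possible design choice that keeps repeated runs from creating duplicate rows in the target.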
